Infrastructure

This work ties together local infrastructure, model serving, automation runtimes, and operator-facing apps. The through-line is simple: build systems that are useful under real conditions, not just interesting in isolation.

Everything runs across a Mac Mini M4 control plane, a Dell GB10 for local inference, and a cluster of Raspberry Pi nodes.

The projects here are connected. Infrastructure supports the runtime, the runtime routes work across the stack, and the applications sit on top of that foundation as things I actually use day to day.

Compute, networking, storage, and private services across the Mac Mini M4, GB10, Pi nodes, and supporting systems.

Schedulers, model serving, health checks, workflows, and the automation surface that keeps things moving.

Local models, routing logic, agent orchestration, and tool-enabled execution across the environment.

Practical tools built on top of the system: dashboards, OCR workflows, decision support, and creative utilities.

These are the systems everything else depends on. If these layers stop working, the rest of the stack does too.

This is the orchestration layer that keeps the environment operational — coordinating recurring jobs, health checks, alerts, and agent-triggered workflows across the stack.

Routing, orchestration, coordination, and system glue.

Local model serving, heavier workloads, and AI execution.

Always-on utilities, supporting apps, and private infrastructure.

Status surfaces, workflows, tools, and the public-facing layer.

This is the foundation underneath everything else — local compute, networking, storage, and the private environment that makes experimentation reliable instead of fragile.

This is the AI layer that makes local models operational — routing work, invoking tools, and handling real multi-step workflows across machines.

This is where the system becomes tangible — applications that consume shared infrastructure, automation, and local AI capabilities to solve real problems in day-to-day workflows.

A curated set of operator-facing applications built on top of shared infrastructure, automation, and local AI — spanning sales intelligence, monitoring, OCR workflows, creative tooling, and personal automation.

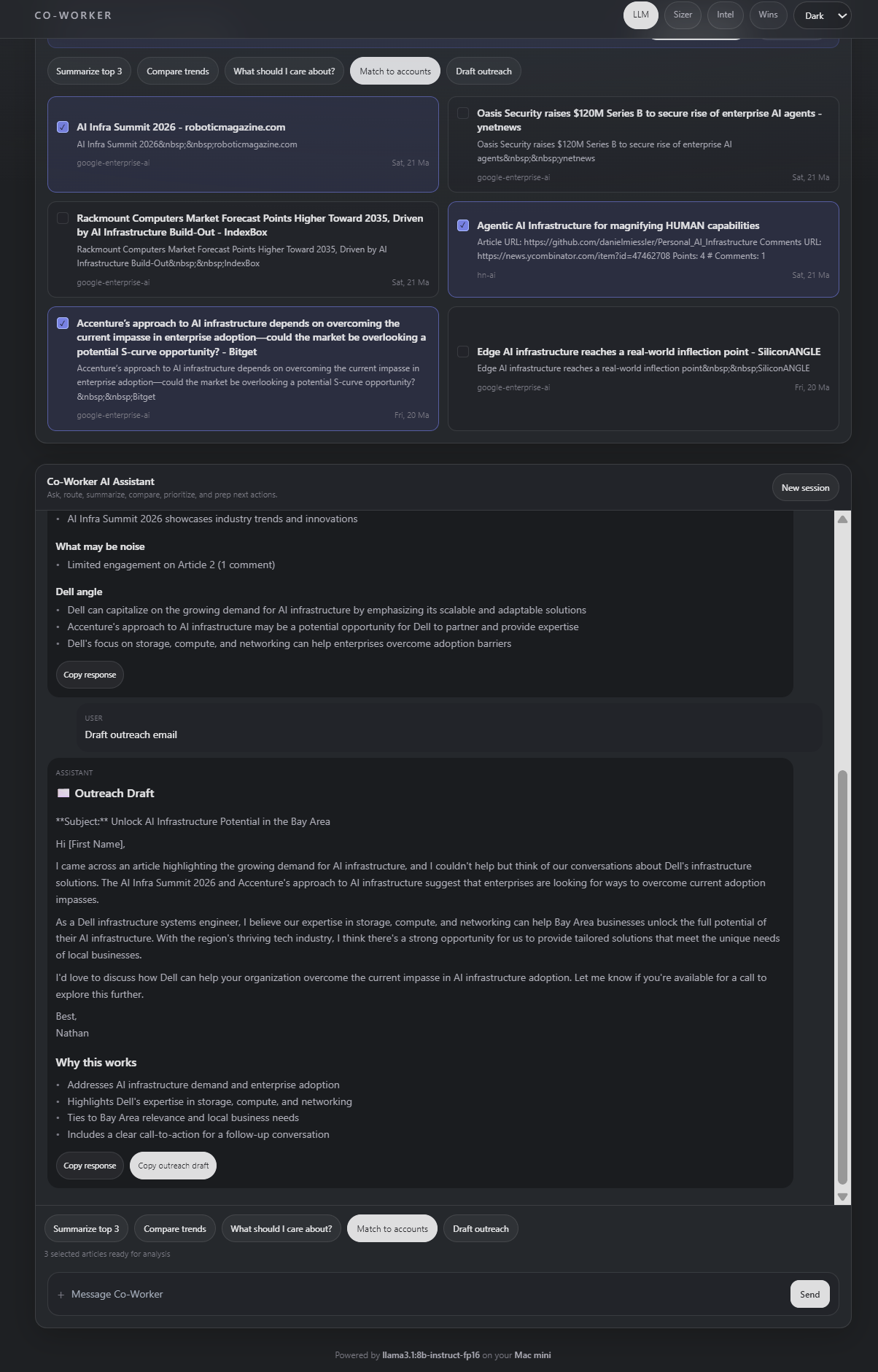

AI-powered sales intelligence system that turns daily signals into account insights, prioritization, and actionable outreach workflows.

Active System (v1 Live)

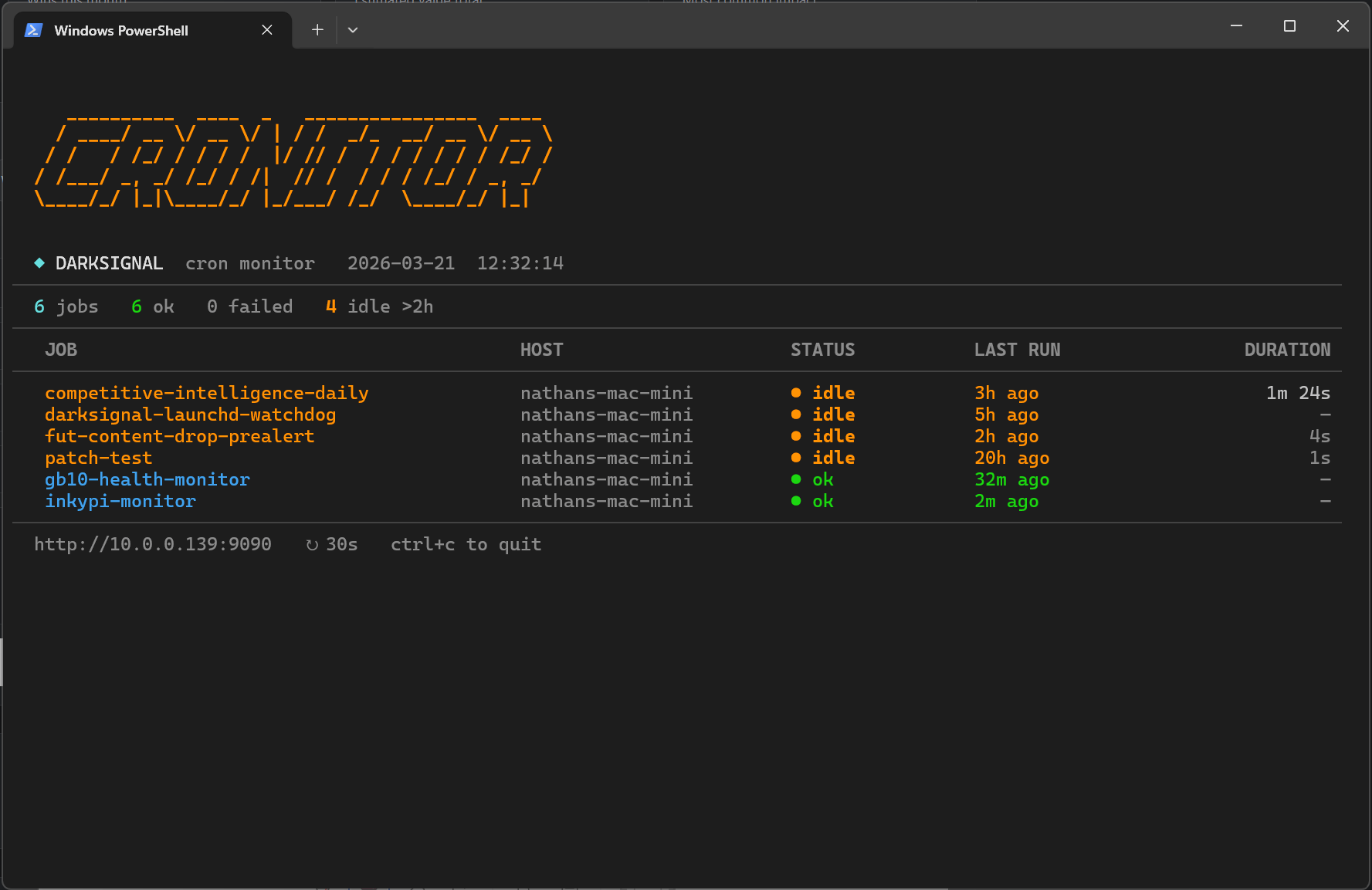

Runtime monitoring system for tracking job execution, failures, and automation health across distributed workflows.

Active System (v1 Live)

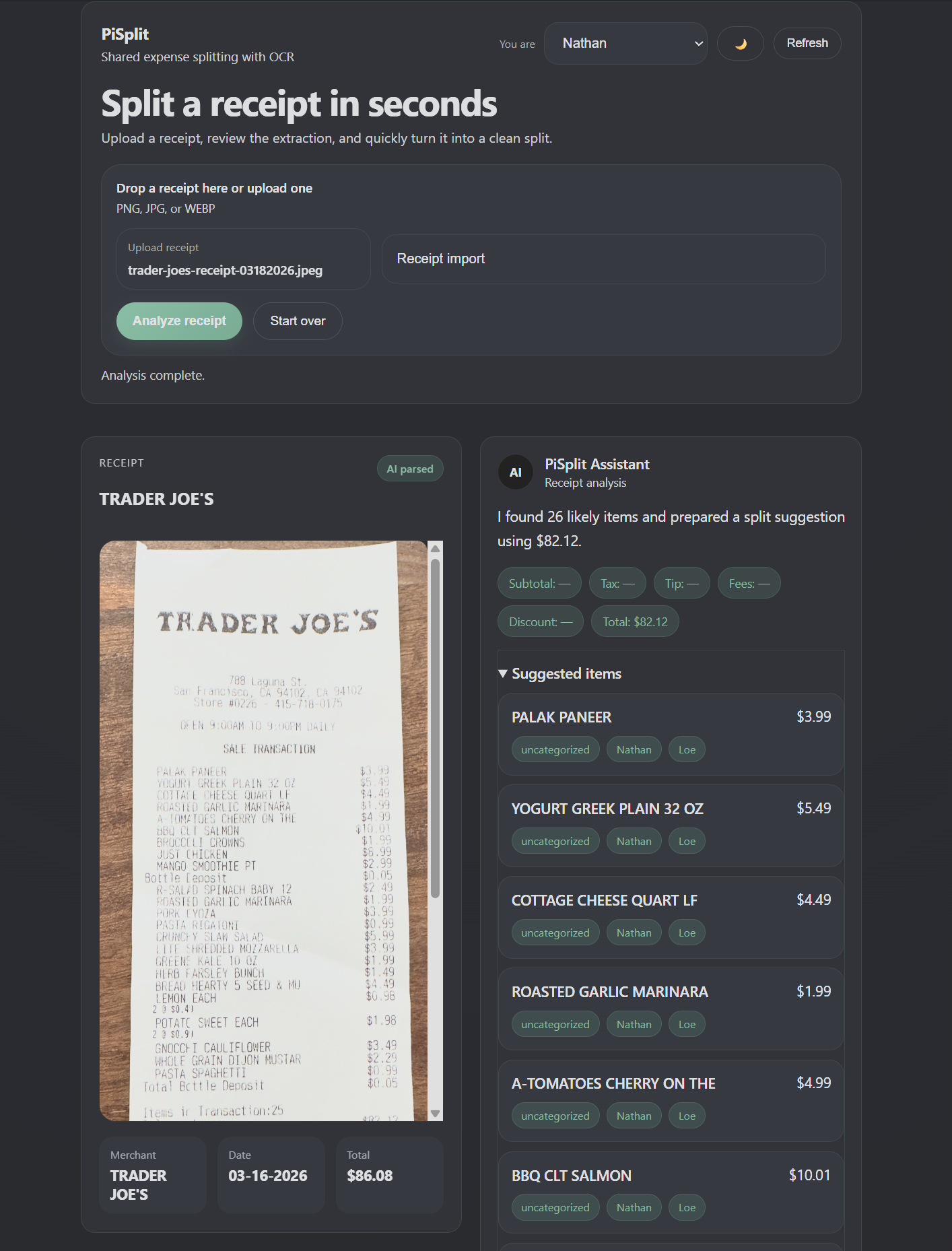

OCR-driven expense workflow that converts receipts into itemized splits, balances, and reimbursement tracking.

Active System (v1 Live)

Local-first image triage workflow for rapidly reviewing, selecting, and preparing photos for editing.

Active System (v1 Live)

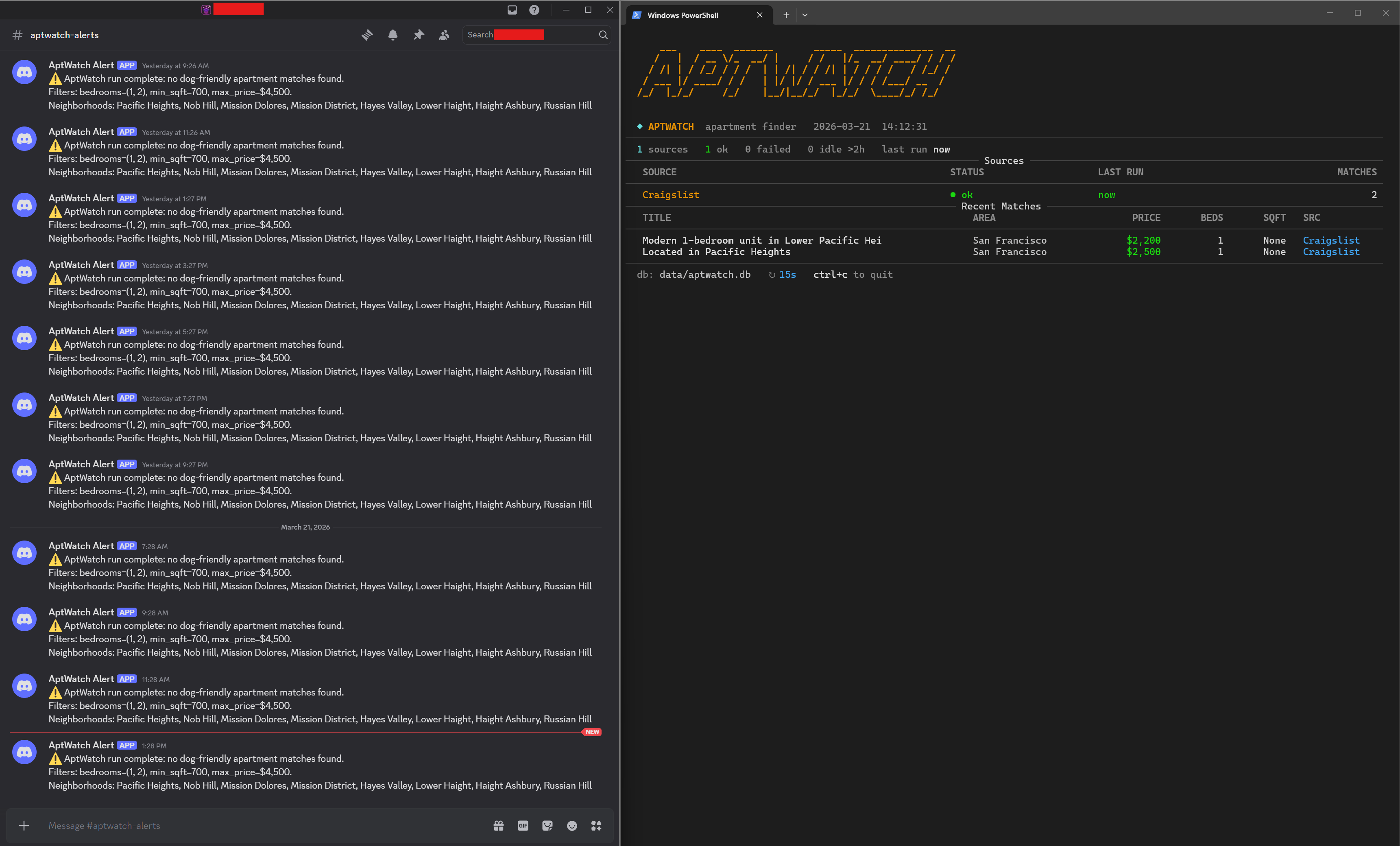

Automated listing pipeline that collects, filters, and ranks apartments based on real-world search criteria.
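As a rough illustration of the collect, filter, and rank flow described above, here is a minimal Python sketch. It is not the actual implementation: the `Listing` fields, the hard constraints (`max_rent`, `min_bedrooms`), and the equal-weight scoring rule are all assumptions made up for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Listing:
    """Hypothetical listing record; the field names are illustrative only."""
    title: str
    rent: int              # monthly rent
    bedrooms: int
    commute_minutes: int


def filter_listings(listings, max_rent, min_bedrooms):
    """Keep only listings that satisfy the hard constraints."""
    return [
        item for item in listings
        if item.rent <= max_rent and item.bedrooms >= min_bedrooms
    ]


def score(listing, max_rent, max_commute=60):
    """Assumed scoring rule: lower rent and shorter commute score higher."""
    rent_score = 1 - listing.rent / max_rent
    commute_score = 1 - min(listing.commute_minutes, max_commute) / max_commute
    return 0.5 * rent_score + 0.5 * commute_score


def rank_listings(listings, max_rent, min_bedrooms):
    """Filter first, then sort the survivors from best to worst score."""
    kept = filter_listings(listings, max_rent, min_bedrooms)
    return sorted(kept, key=lambda item: score(item, max_rent), reverse=True)


# Stand-in for the "collect" step: a hard-coded batch of sample listings.
collected = [
    Listing("A", 2000, 1, 30),
    Listing("B", 1500, 2, 45),
    Listing("C", 2600, 2, 10),   # dropped: over the rent limit
    Listing("D", 1800, 0, 15),   # dropped: too few bedrooms
]
ranked = rank_listings(collected, max_rent=2400, min_bedrooms=1)
```

Separating hard filters from a soft score keeps the two concerns independent: constraints remove listings outright, while the weights only reorder what remains.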

A broader view of the infrastructure, model serving, and supporting systems that make the application layer possible.

Compute, orchestration, model delivery, and reliability foundations that power every downstream system.

Agent runtimes, model routing, and intelligence services that turn models into practical operators.

This work reflects how I approach systems engineering: designing real infrastructure, running local AI, and building tools that are actually used.
